My grandfather used to say, “There’s more than one way to skin a cat.” I always thought it was a disgusting saying, I mean who would ever want to “skin a cat”?! Gross! But the underlying meaning was clear, there’s always more than one way to do something. And no my grandfather never actually skinned any cats, he was just a country boy from a small town in Iowa, where you quite often had to come up with creative ways to get things done.

There are often times when we get so focused on what we are doing, we forget to look around. This happened to me a few weeks ago. We were in the middle of a big upgrade at work, not only where we upgrading our ERP system, we were changing platforms as well (read this as: no one got more than 3-4 hours of sleep per night for about two weeks, including myself). We also have a BI solution that uses SQL Server Integration Services (SSIS) to pull data from our ERP system, stores it in a data warehouse on SQL Server then has a multi-dimensional SQL Server Analysis Services (SSAS) cube that sits on top of the warehouse. Pretty common scenario.

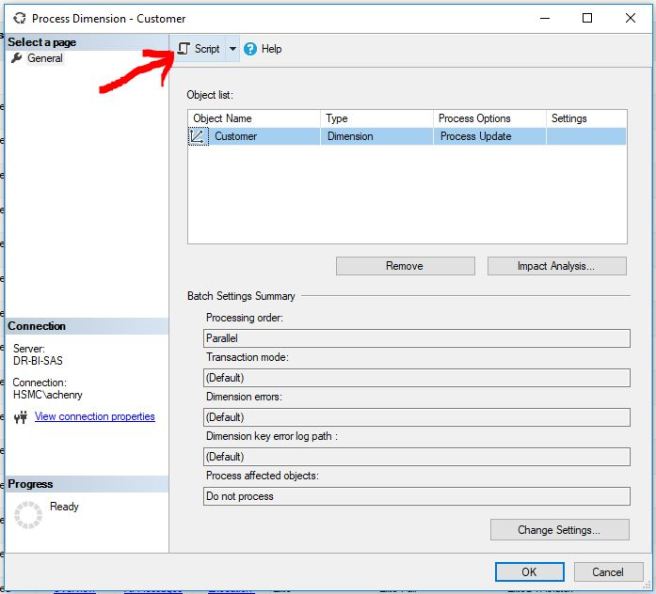

Last part of our ETL process is to process the Dimensions and Facts for the newly loaded data. I have always used the Analysis Services Process Task in SSIS to accomplish this. Works great, it’s stable and easy to use. Just click a few items and you are off and running.

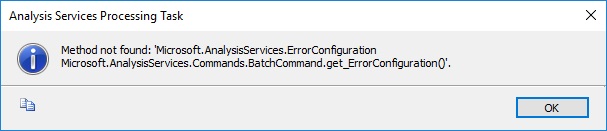

My ETL code had been stable for so long, I hadn’t needed to make any changes since I upgraded from Visual Studio 2015 to Visual Studio 2017. Little did I know that there was a bug in Visual Studio 2017/SSDT 15.5.2 that will not allow you to use the Analysis Services Process Task if your target version of SSIS is 2016. You will get a couple of different errors depending on what you are doing. If you are trying to modify an existing package that uses an Analysis Services Process Task and your target version of SSIS is 2016 you will get the following error:

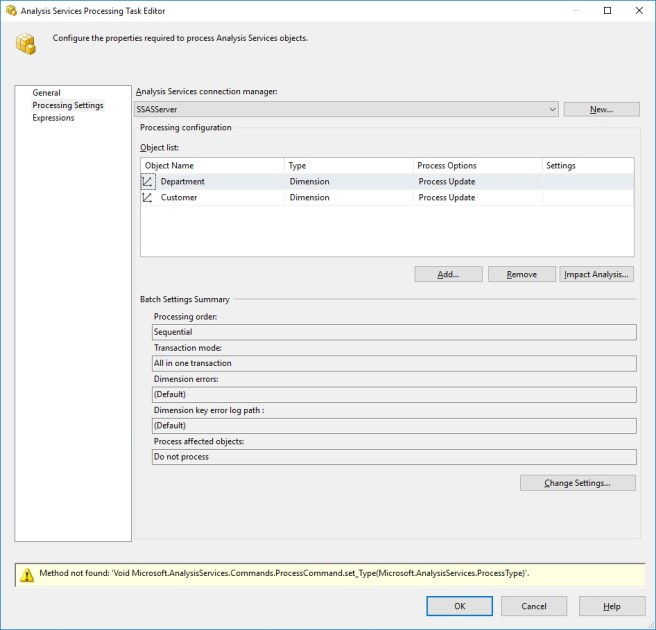

If you try to create a new package using an Analysis Services Process Task and your target version of SSIS is 2016 you will get the following error after it allows you to configure your processing tasks and you click OK:

In both of these scenarios you will not be able to save the package. So what the heck are you supposed to do?! Here’s where my tunnel vision (and panic) sets in. How was I supposed to get my SSAS objects processed?

I could always script out my processing tasks using SSMS and drop them in a SQL Agent job step. But I have multiple environments and multiple cubes so each one would have to be hard coded. Not a great idea, so scratch that.

I could just create a new SSIS project, targeting SSIS 2014, just for the processing tasks. (Thanks to Teo Lachev for the idea. And yes, you can deploy SSIS 2014 targeted packages/projects to SSIS 2016.) It’s better than my first idea. I could parameterize all the connection managers so nothing would be hard coded, but now my code lives in two different projects. Not ideal, but it would definitely be better.

Then Gerhard Brueckl pointed me to the Analysis Services Execute DDL Task.

I did some quick research on it here and turns out, this was exactly what I needed to “skin this cat”. It would allow me to keep my SSAS processing packages in the same project as the rest of the ETL and I could parameterize it to my hearts content (queue beams of light from above and singing angels). Why hadn’t I thought of this before?! To be honest I had never paid attention to this task. It had the term DDL in it, and I wasn’t writing DDL, so why would I ever need it, especially since I was dealing with SSAS. Yes, I know, need I remind you about my lack of sleep and my tunnel vision.

The cool thing about the Analysis Services Execute DDL Task is that you have a choice when it comes to your source for the DDL. I opted for the variable because I needed to parameterize it with the SSAS database name.

I was able to script my processing tasks, dump that code into a variable that I built with expressions so that it could be parameterized. Now one thing to keep in mind is that the XMLA that is produced for SSAS processing tasks has double quotes in it. You will have to either a) escape the double quotes or b) create a variable for the double quote to use in the XMLA in your expression to build your variable. I opted for b, create a variable to hold the double quote, it seemed cleaner to me. Here’s a good post on StackOverFlow on how to do both.

As you can see below, it looks very intimidating with all the XMLA & expressions, but it creates a very pretty XMLA processing task in the end.

I created one task for processing dimensions and one task for processing facts. Mostly because I want to be able to track performance respectively, but you could create one task to do it all.

I was able to add these new packages to my SSIS project and deploy it without issue. They’ve been running ever since with no problems.

I hope this post helps someone else break out of their tunnel vision.

PSA: No cats were harmed in the writing of this blog post.